Palantir is not a household name, and that is by design. It does not sell phones, apps, or social networks. It does not ask for your trust or your consent. Yet behind the scenes, Palantir’s software helps governments and powerful institutions see, sort, track, predict, and act on human behavior at a scale that would have been unthinkable a generation ago. It sits quietly inside intelligence agencies, military commands, police departments, health systems, and corporate back offices, turning raw data into decisions that affect who is watched, who is flagged, who is stopped, and who is left alone. This is not science fiction, and it is not conspiracy. It is a business model, and its consequences reach far beyond the few people who have ever heard the company’s name.

Whitney Webb gives a history of Palantir. In her account, Palantir essentially started as a DARPA project that threatened to destroy constitutional protections, so it was quietly taken out of government and rebranded as a private company to do the same thing.

What is Palantir?👇🏻

Who founded Palantir - and where they came from

Palantir Technologies was founded in 2003, not by random programmers, but by people already deeply connected to power, money, and intelligence networks.

The key founders were:

Peter Thiel

Peter Thiel is the most important name behind Palantir.

Before Palantir:

He co-founded PayPal

Became a billionaire very early

Built close relationships in Silicon Valley, Wall Street, and U.S. political circles

Peter Thiel has long believed that democracy is inefficient and that society should be run by “competent elites,” not by mass public opinion. Palantir was his answer to a problem he openly talked about after 9/11:

how to give governments more power to detect threats before they happen, without public oversight getting in the way.

He’s behind the scenes of plans to remake America, from mass surveillance to authoritarian policy blueprints. His companies profit from control. His vision? A future where data rules, privacy dies, and power is centralized in the hands of a few. Peter Thiel isn’t just rich. He’s dangerous because he’s strategic. He’s building a future where democracy is optional, surveillance is constant, and dissent is punished. Ignore him at your peril: he’s already rewriting the rules.

Alex Karp

Alex Karp is Palantir’s public face and CEO.

Background:

PhD in philosophy (not computer science)

Spent years in Europe

Comfortable talking about power, order, and authority

In a recent shareholder briefing, he says ‘[we have] dedicated our company to the service of the West and the United States of America…especially in places we can’t talk about’ and ‘Palantir is here to help institutions we partner with be the best in the world…and when necessary to scare enemies and on occasion kill them…We hope you’re in favour of that…[and] are enjoying being a partner…we’re really happy and enjoying what we’re doing’.

The CIA’s involvement - not a rumor, not a theory

Palantir’s connection to the CIA is not something critics “discovered later.” It was there from the beginning.

In its early years, Palantir received funding and support from In-Q-Tel, the official venture capital arm of the Central Intelligence Agency.

In-Q-Tel does not invest like a normal VC. It exists for one reason: to identify, shape, and secure technologies useful to U.S. intelligence and national security before those tools reach the public market.

When In-Q-Tel backs a company, it means:

Intelligence agencies already see a use for the technology

The product is being built to solve intelligence problems

The company is being guided toward government adoption early

Palantir was not a startup that later “won” CIA contracts. It was developed with intelligence use in mind from the start.

What problem the CIA was trying to solve

After 9/11, U.S. intelligence agencies faced a problem they openly admitted:

They had too much data, spread across:

phone records

travel data

financial transactions

surveillance reports

human intelligence notes

The failure, they said, was not lack of information — it was lack of integration. Palantir’s job was to fix that.

Its software allowed analysts to:

merge disconnected databases

map relationships between people, places, and events

spot patterns humans would miss

flag individuals and networks faster

In short: turn data into actionable suspicion.
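To make the idea of "integration" concrete, here is a minimal sketch of how separate record sets can be merged into a single relationship graph. It is purely illustrative: the records, field names, and the `build_link_graph` and `shared_links` helpers are invented for this example and say nothing about how Palantir's software is actually built.

```python
from collections import defaultdict

# Invented toy records standing in for three unconnected databases.
phone_records = [
    {"person": "A", "called": "B"},
    {"person": "B", "called": "C"},
]
travel_records = [
    {"person": "A", "destination": "City X"},
    {"person": "C", "destination": "City X"},
]
financial_records = [
    {"person": "B", "paid": "C"},
]


def build_link_graph(phone, travel, financial):
    """Merge the separate datasets into one undirected graph of links."""
    graph = defaultdict(set)

    def link(a, b):
        graph[a].add(b)
        graph[b].add(a)

    for record in phone:
        link(record["person"], record["called"])
    for record in travel:
        link(record["person"], record["destination"])
    for record in financial:
        link(record["person"], record["paid"])
    return graph


def shared_links(graph, a, b):
    """Return everything (people or places) that both a and b are linked to."""
    return graph[a] & graph[b]


graph = build_link_graph(phone_records, travel_records, financial_records)

# A and C never appear together in any single dataset, yet once the data
# is merged they are connected through a shared contact and a shared place.
print(sorted(shared_links(graph, "A", "C")))
```

No single database links A to C. The connection only exists after the merge, which is the point: the "pattern" is a product of integration, not of anything either person is recorded as having done.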

Palantir’s Gotham platform is built for militaries, police, and intelligence agencies. Think of it as Google for spies. It can:

Map terrorist networks

Track targets in real time

Uncover hidden relationships

Palantir is often described as:

“A private tech company that happens to work with governments.”

That is misleading.

Palantir grew inside the intelligence ecosystem. Its first major users were not businesses or hospitals — they were spies, analysts, and security agencies.

The company learned early how to:

operate behind secrecy

avoid public scrutiny

frame its work as “necessary”

treat oversight as an obstacle, not a safeguard

Those habits stayed with it as it expanded into policing, immigration, health, and corporate systems.

Most tech companies need public trust to survive. Palantir does not. It doesn’t need users to love it. It needs institutions with power to depend on it. That dependency, once built, is very hard to undo.

And that is why Palantir rarely advertises, rarely explains itself clearly, and rarely appears in mainstream debates about surveillance. By the time the public notices, the system is already in place.

The UK health data scandal

During the COVID-19 pandemic, Palantir Technologies was brought in to help run the NHS COVID-19 data store in the UK. Officials described it as an emergency response: a temporary system to help manage hospital capacity, ventilators, and resources during a national crisis.

What the public was not clearly told at the time was how much power that arrangement quietly handed over.

Under the original contracts and data-sharing terms, private companies working on the NHS COVID-19 data store were allowed to access and use massive amounts of sensitive health data, including records tied to real patients. Legal challenges later revealed that these companies could potentially use the data to develop and train AI systems, which could later be commercialized and sold for profit.

In simple terms: private firms were positioned to turn public health records into private assets.

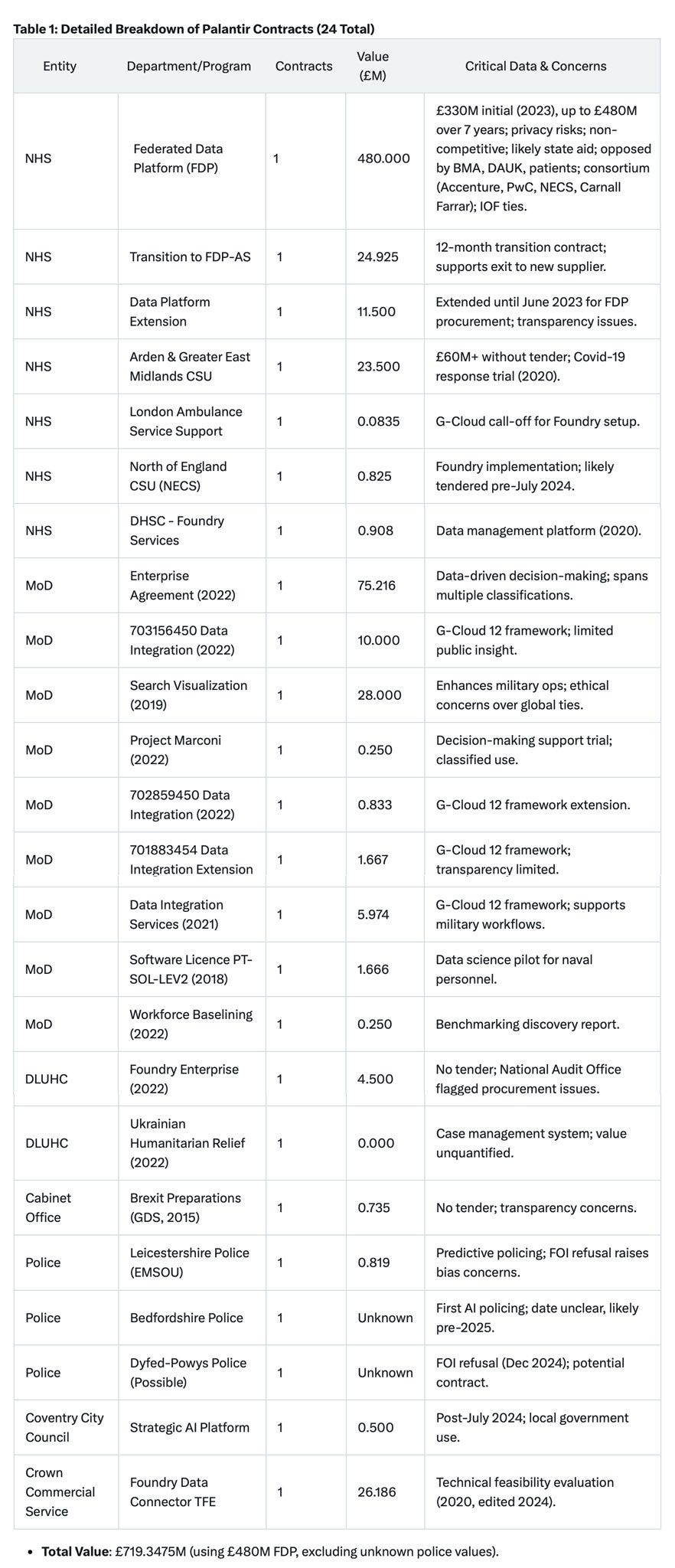

Palantir has 24 contracts with key UK public institutions, including the NHS, the Ministry of Defence, police forces, the Cabinet Office, the DLUHC, and Coventry City Council.

Predictive policing — when software decides who looks suspicious

One of the most controversial uses of Palantir Technologies software has been in predictive policing.

Using platforms like Palantir Gotham and Metropolis, police departments were able to combine arrest records, field interviews, social connections, phone data, and location history into single profiles. The software then flagged people as potential “risks” — not because of a crime they committed, but because of patterns, associations, and probabilities.

In practice, this meant:

You could be flagged because of who you know

Because you live in a certain area

Because an algorithm decided your behavior resembled past crime data

There was no clear explanation given to those flagged. No way to challenge the label. No requirement to prove wrongdoing.
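A toy example shows how this kind of flagging can work in principle. It is a sketch under stated assumptions, not a description of any real system: the people, the weights, the `high_arrest_areas` set, and the `FLAG_THRESHOLD` value are all invented for illustration.

```python
# Invented example data. Weights and threshold are arbitrary assumptions.
people = {
    "P1": {"prior_arrests": 2, "area": "zone_1", "contacts": ["P2"]},
    "P2": {"prior_arrests": 0, "area": "zone_1", "contacts": ["P1"]},
    "P3": {"prior_arrests": 0, "area": "zone_2", "contacts": []},
}
high_arrest_areas = {"zone_1"}  # areas with many historical arrests
FLAG_THRESHOLD = 3


def risk_score(pid, people):
    """Score a person from their record, their area, and their associations."""
    person = people[pid]
    score = 2 * person["prior_arrests"]        # own record
    if person["area"] in high_arrest_areas:    # where they live
        score += 1
    for contact in person["contacts"]:         # who they know
        if people[contact]["prior_arrests"] > 0:
            score += 2
    return score


flagged = [pid for pid in people if risk_score(pid, people) >= FLAG_THRESHOLD]
print(flagged)
```

In this sketch P2 has no arrests at all, but still crosses the threshold because of an address and one acquaintance. P3, with an identical record, is left alone. The difference between them is association and geography, not conduct.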

Los Angeles and New Orleans — real examples, real secrecy

In Los Angeles, Palantir tools were used by the LAPD to analyze large volumes of policing data and generate leads. Civil rights groups later warned that the system reinforced existing bias by recycling historical arrest data — meaning past discrimination became future prediction.

But New Orleans revealed something even more serious.

Between 2012 and 2018, the New Orleans Police Department secretly deployed Palantir’s predictive policing software without public knowledge or approval. The system used data-mining algorithms to identify people labeled as potential crime risks by analyzing personal data such as criminal records, social media activity, and alleged gang affiliations. Individuals were flagged not for what they had done, but for how the software interpreted their associations and behavior.

The bigger agenda

Seen on its own, Palantir looks like just another data company. Seen in context, it fits into something much larger.

Across governments and financial systems, the same direction keeps appearing: centralized identity, centralized data, and centralized control.

Programs like REAL ID, expanding digital ID systems, and the push toward CBDCs and regulated stablecoins all move society toward a single structure where identity, money, and behavior are tightly linked.

The logic is simple:

A centralized digital ID defines who you are

Programmable money defines what you can do

Data analytics decides whether your behavior is acceptable

Why companies like Palantir matter here

Systems like this don’t work without powerful data platforms.

Someone has to:

integrate identity data

track behavior across systems

flag “risk” or “non-compliance”

turn policy into automated decisions

That is exactly what Palantir specializes in. This doesn’t mean Palantir controls these systems alone. It means it provides the infrastructure logic that makes them possible. Once built, these systems don’t need constant political justification. They run quietly in the background.

Why the public rarely hears about Palantir

Most powerful companies need public attention to grow. Palantir is the opposite.

It doesn’t sell products to ordinary people. It doesn’t rely on brand loyalty, downloads, or public approval. Its customers are governments, militaries, police forces, and large institutions - organizations that operate behind closed doors by default. Because of that, Palantir has no reason to explain itself to the public.

Its contracts are often buried in procurement documents, framed as “data integration” or “operational support.” Its work is described in technical language that sounds boring and harmless. And when people raise concerns, the response is usually the same: national security, confidentiality, or complexity.

There is also another reason Palantir stays out of headlines.

It doesn’t act alone. It embeds itself inside existing institutions, becoming part of how decisions are made rather than a visible decision-maker. When harm occurs - wrongful surveillance, biased targeting, data misuse - responsibility is diffused. Officials blame the system. The system blames the data. And the company stays in the background.

Unlike social media platforms, Palantir doesn’t provoke public outrage because most people never interact with it directly. You don’t log into Palantir. You don’t agree to its terms of service. You only feel its effects indirectly - when you’re flagged, delayed, questioned, denied, or quietly monitored.

Power that doesn’t need your attention is the hardest power to challenge.

That is why Palantir Technologies can shape public life so deeply while remaining largely unknown. The less visible the system, the fewer people ask who built it and why.

For those who have seen the famously dystopian movie Minority Report, Palantir is the company most likely to make its vision of pre-crime policing a reality in the near future.

Conclusion

Palantir is not dangerous because it is secret. It is dangerous because it is normalized.

At every stage, its expansion has been justified as necessary, temporary, or technical. Emergency responses become permanent infrastructure. Surveillance becomes “data integration.” Prediction replaces evidence. And accountability dissolves into systems no one person controls and no one person can be questioned.

This is not about one company breaking the law. It is about a model of power that no longer needs public consent to function. When identity, behavior, and access are mediated through data systems, control does not have to announce itself. It simply operates.

You do not need to believe in conspiracies to see the pattern. It is visible in policing, in health data, in national security, and in the quiet merging of identity and money into programmable systems. None of this requires dictatorship. It only requires complexity, outsourcing, and silence.

The most effective forms of control are not enforced with force. They are enforced with software.

By the time people ask whether this is the future they agreed to, the system will already be in place - efficient, automated, and very difficult to undo.

The question is no longer whether this kind of power could be abused. The question is who gets to decide when prediction becomes punishment and why that decision was never ours to make.

Author’s note: If you find value in what I write - if it supports your research, challenges your assumptions, or helps you see things most people never question - I want you to know that it truly matters to me.

Revealed Eye is my full-time, independent work. This platform allows me to stay free from corporate influence, sponsors, and filters and to focus entirely on researching and writing.

